Introduction

In today’s fast-paced technology landscape, enabling developers to take ownership of their infrastructure is crucial for efficient development and deployment. In this blog post, we discuss how Tatari’s SRE team has empowered developers by moving deployments and configuration closer to them, letting them own their applications while we maintain centralized control over global resources.

CI -> CD at Tatari

At Tatari, we firmly believe that the container image is the default building block of a modern cloud architecture. Once Continuous Integration (CI) checks pass, the container image is pushed to our AWS container registry. Argo CD watches for new images whose tags follow a semantic versioning (SemVer) pattern, and a new matching tag triggers deployment into our various environments.
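The core of tag-driven deployment is deciding which tag counts as "the newest release". A minimal Python sketch of that selection step follows; it is an illustration only, not Argo CD's implementation, and the accepted tag format (an optional `v` prefix, no pre-release suffixes) is an assumption.

```python
import re
from typing import Iterable, Optional, Tuple

# Assumed tag format: MAJOR.MINOR.PATCH with an optional leading "v".
# Pre-release/build suffixes such as "1.2.3-rc.1" are rejected on purpose,
# so only release tags are eligible for deployment in this sketch.
SEMVER_TAG = re.compile(r"^v?(0|[1-9]\d*)\.(0|[1-9]\d*)\.(0|[1-9]\d*)$")


def parse_semver(tag: str) -> Optional[Tuple[int, int, int]]:
    """Return (major, minor, patch) for a release tag, or None if it doesn't match."""
    match = SEMVER_TAG.match(tag)
    if match is None:
        return None
    major, minor, patch = (int(part) for part in match.groups())
    return (major, minor, patch)


def latest_release(tags: Iterable[str]) -> Optional[str]:
    """Pick the highest release tag, ignoring tags like 'latest' or commit SHAs."""
    parsed = ((parse_semver(tag), tag) for tag in tags)
    releases = [(version, tag) for version, tag in parsed if version is not None]
    if not releases:
        return None
    # Compare numerically, so 1.10.0 beats 1.9.0 (a plain string sort would not).
    return max(releases)[1]


tags = ["latest", "3f9c2ab", "v1.9.0", "1.10.0", "1.10.0-rc.1", "v1.2.15"]
print(latest_release(tags))  # 1.10.0
```

Parsing into integer tuples is the important design choice: comparing tags as strings would rank `v1.9.0` above `1.10.0`.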

Decentralizing Deployments and Configuration

To give developers ownership of their infrastructure, we began decentralizing deployments and configuration by moving them into their respective application repositories. This lets developers manage their applications’ desired state declaratively, which promotes consistency and reduces human error.

In our implementation, we co-located Helm charts with their corresponding application code and migrated infrastructure-specific Helm charts to a dedicated repository. This streamlines infrastructure management, gives developers better control over their deployments, and lets them iterate on their applications rapidly.

Centralizing Global Resource Management for Enhanced Consistency and Control

Empowering developers with control over their infrastructure is vital, but it is equally important to manage top-level resources centrally to ensure consistency across all clusters. Centralizing this configuration promotes a unified approach to resource management and helps enforce best practices across the organization.

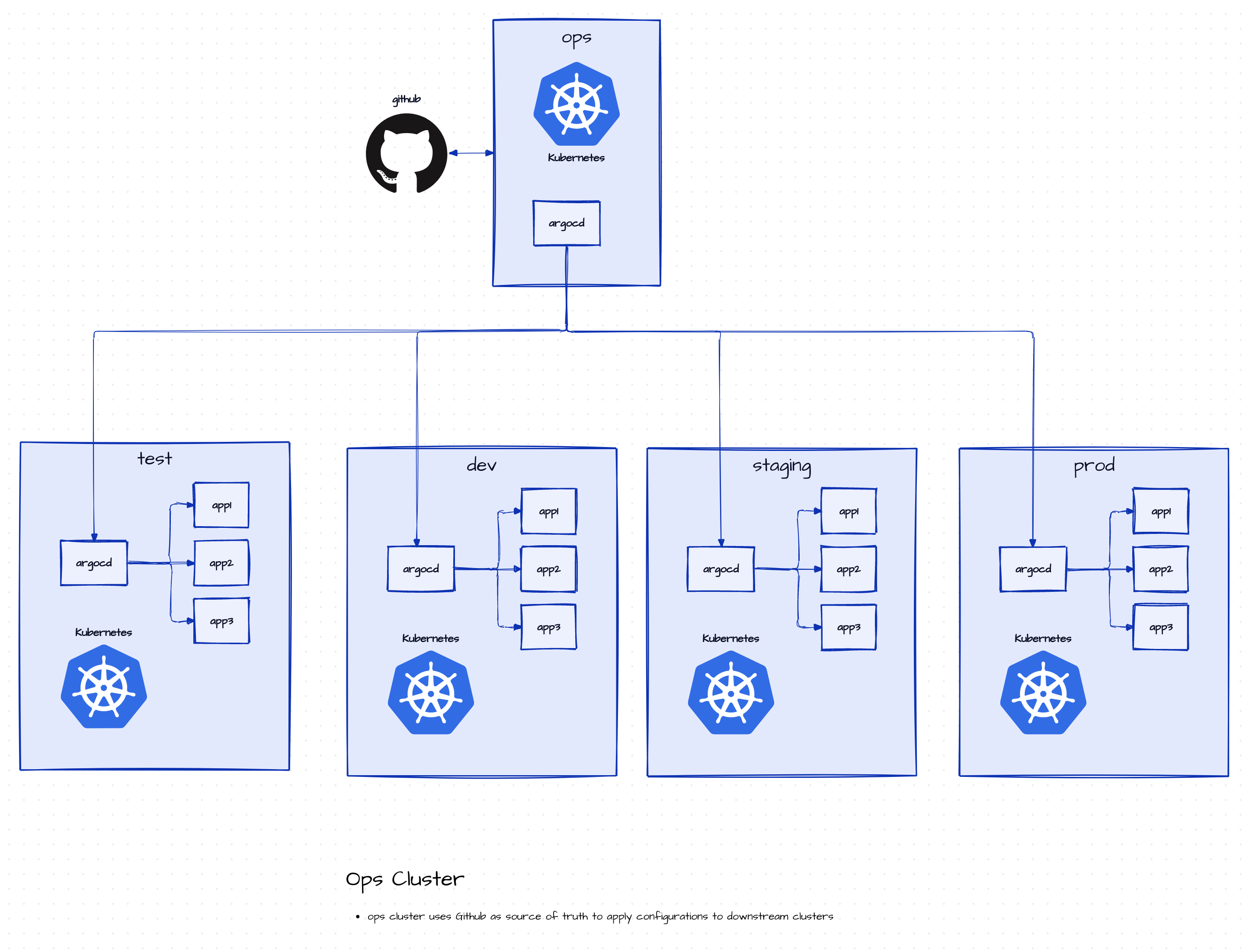

To strike this balance, we established an operations (ops) cluster responsible for managing infrastructure services and applications. Using Terraform, Argo CD, and Kubernetes, we manage global resources from this cluster while keeping all clusters consistent. Developers can therefore concentrate on their applications without worrying about infrastructure management.

One significant advantage of this centralized approach is a “single pane of glass” for monitoring and management. It provides clear visibility into the status of all resources and separates Kubernetes core deployments from the “applications” that developers manage. A few applications are deployed on the ops cluster itself as exceptions, and these are accounted for in the same setup so that resources throughout the organization remain under comprehensive control.

Using Argo CD for GitOps-Based Management

Argo CD is an open-source, Kubernetes-native Continuous Delivery (CD) tool that automates the deployment and synchronization of applications to Kubernetes clusters. By continuously monitoring a Git repository for changes and automatically applying those changes to the target Kubernetes cluster, Argo CD simplifies the management and deployment process.
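Underneath the monitor-and-apply cycle is a reconciliation step: compare the desired state rendered from Git with the live state in the cluster, then compute what must change. The Python sketch below shows only that diffing step under simplifying assumptions; plain dicts stand in for Kubernetes objects, and real Argo CD handles far more cases (health, hooks, ordering, normalization).

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple

# Resources are keyed by (kind, namespace, name). Plain dicts stand in for the
# full Kubernetes objects that Argo CD actually compares.
Key = Tuple[str, str, str]


@dataclass(frozen=True)
class Action:
    verb: str  # "create", "update", or "delete"
    key: Key


def plan_sync(desired: Dict[Key, dict], live: Dict[Key, dict], prune: bool = False) -> List[Action]:
    """Compute the actions that would converge the live state onto the desired state."""
    actions: List[Action] = []
    for key in sorted(desired):
        if key not in live:
            actions.append(Action("create", key))
        elif live[key] != desired[key]:
            actions.append(Action("update", key))
    # Resources that exist live but are gone from Git are removed only when pruning is on.
    if prune:
        for key in sorted(live.keys() - desired.keys()):
            actions.append(Action("delete", key))
    return actions


# Desired state as rendered from Git vs. what is currently running.
desired = {
    ("Deployment", "web", "api"): {"image": "api:1.10.0", "replicas": 3},
    ("Service", "web", "api"): {"port": 80},
}
live = {
    ("Deployment", "web", "api"): {"image": "api:1.9.0", "replicas": 3},
    ("ConfigMap", "web", "old-config"): {"data": "stale"},
}
for action in plan_sync(desired, live, prune=True):
    print(action.verb, action.key)
```

Pruning is off by default here because Argo CD's automated sync policy also does not delete resources unless `prune` is enabled; the usage passes `prune=True` only to show the delete case.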

In our implementation, we installed Argo CD in our Kubernetes (K8s) ops cluster and used an ApplicationSet there as the centralized control point for managing the Argo CD instances in all other clusters. This enabled GitOps-based management of infrastructure applications across multiple clusters. Because deployment and synchronization in each K8s cluster are driven automatically by changes to the Git repository, we reinforced our GitOps practices and streamlined application deployment across the entire organization. This “Argo of Argos” setup not only improved consistency but also gave us a unified approach to resource management while still letting developers own their infrastructure.
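An ApplicationSet pairs a template with one or more generators, and the ApplicationSet controller renders one Argo CD Application per generated parameter set. The Python sketch below mimics that expansion for a list generator so the "Argo of Argos" shape is visible. The choice of list generator, the repository URL, the paths, and the cluster names are all hypothetical placeholders; a cluster generator is another common option.

```python
from typing import Dict, List

# Hypothetical ApplicationSet: one Argo CD instance per workload cluster,
# all managed from the ops cluster. URLs, paths, and names are placeholders.
APPLICATION_SET = {
    "apiVersion": "argoproj.io/v1alpha1",
    "kind": "ApplicationSet",
    "metadata": {"name": "argocd-instances", "namespace": "argocd"},
    "spec": {
        "generators": [{
            "list": {
                "elements": [
                    {"cluster": "staging", "url": "https://staging.example.internal"},
                    {"cluster": "production", "url": "https://production.example.internal"},
                ]
            }
        }],
        "template": {
            "metadata": {"name": "{{cluster}}-argocd"},
            "spec": {
                "project": "default",
                "source": {
                    "repoURL": "https://git.example.com/sre/infra-apps.git",
                    "targetRevision": "main",
                    "path": "argocd/overlays/{{cluster}}",
                },
                "destination": {"server": "{{url}}", "namespace": "argocd"},
            },
        },
    },
}


def substitute(value, params: Dict[str, str]):
    """Recursively replace {{key}} placeholders in strings, returning new objects."""
    if isinstance(value, str):
        for key, replacement in params.items():
            value = value.replace("{{" + key + "}}", replacement)
        return value
    if isinstance(value, dict):
        return {k: substitute(v, params) for k, v in value.items()}
    if isinstance(value, list):
        return [substitute(v, params) for v in value]
    return value


def render_applications(appset: dict) -> List[dict]:
    """Expand each list generator element into one Argo CD Application."""
    template = appset["spec"]["template"]
    apps = []
    for generator in appset["spec"]["generators"]:
        for params in generator["list"]["elements"]:
            rendered = substitute(template, params)
            apps.append({"apiVersion": "argoproj.io/v1alpha1", "kind": "Application", **rendered})
    return apps


for app in render_applications(APPLICATION_SET):
    print(app["metadata"]["name"], "->", app["spec"]["destination"]["server"])
```

Adding a cluster to this sketch means adding one element to the generator; the template stays untouched, which is what keeps every cluster's Argo CD configured the same way.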

Migrating Vault to Kubernetes and Enhancing Security

To further strengthen our security posture, we migrated our Vault instances from EC2 to our K8s cluster as part of the Argo of Argos setup described above. This migration aligned our infrastructure management with modern best practices and improved the security of our secret management system. Making Vault a first-class citizen in our container infrastructure lets us take better advantage of our service mesh and makes security updates and software upgrades relatively painless.
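With Vault running in the cluster, workloads can use Vault's Kubernetes auth method: a pod presents its service account JWT to the `auth/kubernetes/login` endpoint and receives a Vault client token. The Python sketch below builds that login request with the standard library; the Vault address, the role name, and the default `kubernetes` mount path are assumptions for illustration.

```python
import json
import urllib.request
from pathlib import Path

# Default location where Kubernetes mounts a pod's service account token.
SA_TOKEN_PATH = Path("/var/run/secrets/kubernetes.io/serviceaccount/token")


def build_login_request(vault_addr: str, role: str, jwt: str, mount: str = "kubernetes") -> urllib.request.Request:
    """Build (but do not send) a Vault Kubernetes auth login request."""
    body = json.dumps({"role": role, "jwt": jwt}).encode("utf-8")
    return urllib.request.Request(
        url=f"{vault_addr.rstrip('/')}/v1/auth/{mount}/login",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def login(vault_addr: str, role: str) -> str:
    """Exchange this pod's service account token for a Vault client token.

    Only works inside a pod whose service account is bound to `role` in Vault.
    """
    jwt = SA_TOKEN_PATH.read_text().strip()
    with urllib.request.urlopen(build_login_request(vault_addr, role, jwt)) as resp:
        return json.load(resp)["auth"]["client_token"]


# Hypothetical in-cluster Vault service address and role name.
req = build_login_request("http://vault.vault.svc:8200", "billing-api", "<service-account-jwt>")
print(req.get_method(), req.full_url)
```

No static Vault credentials are shipped with the application here: the pod's Kubernetes identity is the credential.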

We took the opportunity to upgrade from the Vault Key/Value (KV) Secrets Engine v1 to v2. KV v2 allows more granular ACLs by separating access to secret data from access to metadata, and it adds secret versioning, which provides historical access to secrets and rollback in case of human error. We’re excited to use Kubernetes authentication for secret management, which better aligns our security posture with our container-oriented architecture.
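Two KV v2 properties are easy to show concretely. First, KV v2 exposes a secret under separate `data/` and `metadata/` API prefixes, which is what lets ACL policies grant them independently. Second, versions accumulate, and a rollback writes an older version's data as a new version instead of rewriting history. The Python sketch below illustrates both; `VersionedSecret` is a hypothetical in-memory toy, not a Vault client, and the mount and secret names are placeholders.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


def kv2_api_paths(mount: str, secret_path: str) -> Dict[str, str]:
    """Map a logical KV v2 secret path to its separate data and metadata API paths."""
    return {
        "data": f"{mount}/data/{secret_path}",
        "metadata": f"{mount}/metadata/{secret_path}",
    }


@dataclass
class VersionedSecret:
    """Toy in-memory model of KV v2 versioning semantics (hypothetical, not Vault)."""
    versions: List[dict] = field(default_factory=list)

    def put(self, data: dict) -> int:
        self.versions.append(dict(data))
        return len(self.versions)  # versions are numbered from 1

    def get(self, version: Optional[int] = None) -> dict:
        number = len(self.versions) if version is None else version
        if not 1 <= number <= len(self.versions):
            raise KeyError(f"no version {number}")
        return self.versions[number - 1]

    def rollback(self, version: int) -> int:
        # Like `vault kv rollback`: restore old data by writing it as a NEW version.
        return self.put(self.get(version))


print(kv2_api_paths("secret", "billing/db"))
secret = VersionedSecret()
secret.put({"password": "old"})
secret.put({"password": "oops"})  # human error
print(secret.rollback(1), secret.get())  # 3 {'password': 'old'}
```

Because rollback appends rather than overwrites, the mistaken value remains in history as version 2, which keeps an audit trail of what happened.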

Note: We haven’t fully removed the v1 mounts yet, but we plan to do so in the near future.

Conclusion

By empowering developers to own their infrastructure while maintaining centralized control over global resources, we have balanced flexibility and consistency in our infrastructure management. Adopting Kubernetes, Terraform, and Argo CD, along with migrating Vault to our K8s cluster, has enabled our team to enhance security, streamline deployments, and ultimately foster a more efficient development environment.

Special Thanks

Members of the SRE Team helped execute these improvements and collect the talking points for this blog post. Without them, none of this would be possible.

- Patrick Shelby

- Calvin Morrow

- Dan Mahoney

- Keegan Ferrando

- Stephen Price

- Margot Maxwell

References:

- https://sre.google/

- https://semver.org/

- https://www.weave.works/technologies/gitops/

- https://continuousdelivery.com/

- https://developer.hashicorp.com/vault/tutorials/secrets-management/compare-kv-versions

- https://helm.sh/docs/intro/using_helm/

- https://kubernetes.io/docs/concepts/

- https://www.terraform.io/use-cases/infrastructure-as-code